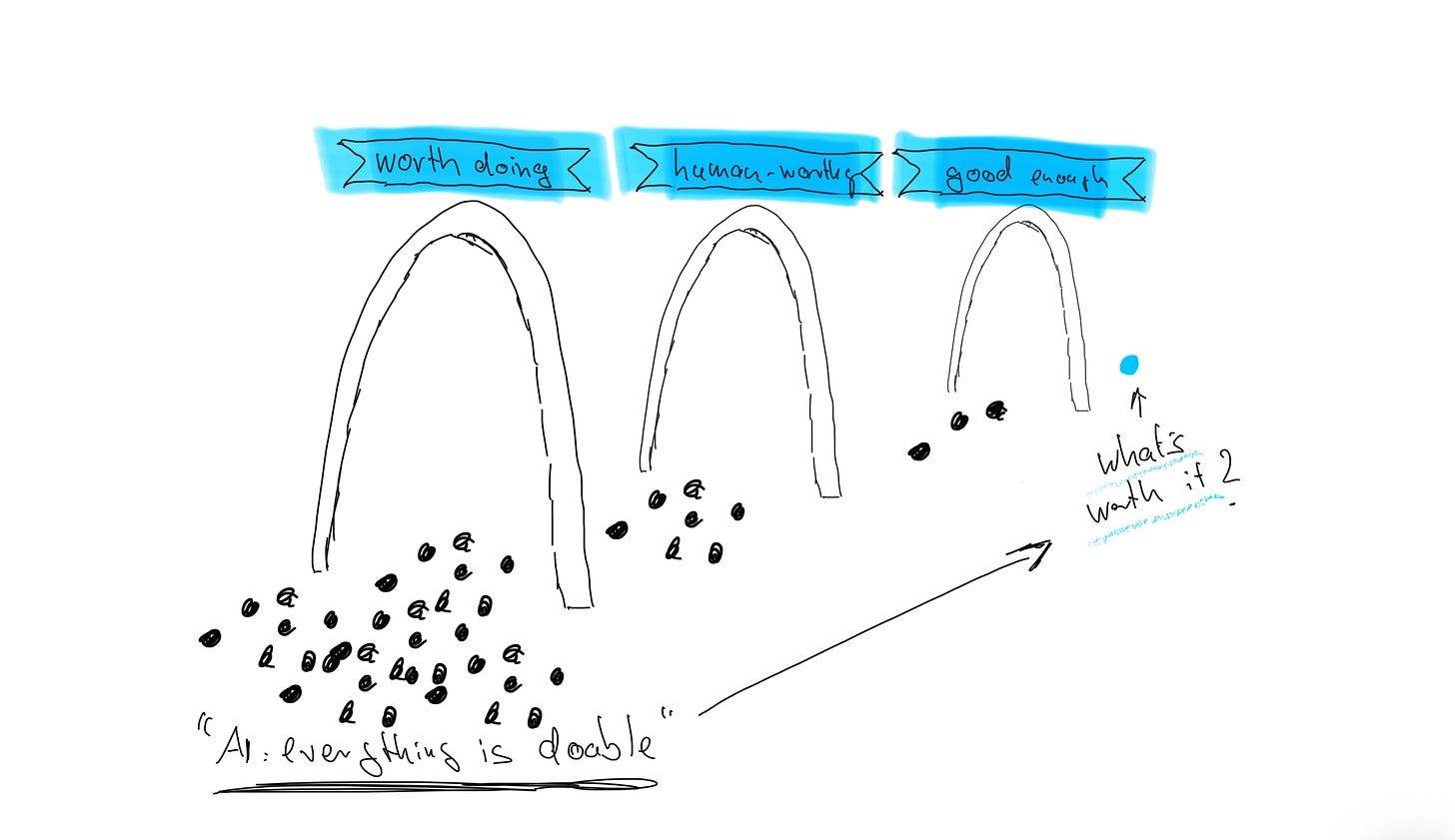

AI removed the constraint. Now it’s yours to set.

What’s worth doing now that everything is doable?

Every major productivity tool makes the same promise: do the same work in less time. Every one delivers the same surprise: we don’t do less. We do more. Steam engines got more efficient, and we burned more coal. Email replaced faxes, and communication volume exploded. The economist Robert Solow saw it in 1987: “You can see the computer age everywhere but in the productivity statistics.”

Nearly forty years later, the pattern is back.

Berkeley researchers tracked 200 employees for eight months after AI adoption. People worked faster, took on broader work, and put in longer hours, all voluntarily. Nobody asked them to. The tool removed the constraint, and people didn’t replace it with judgment.

I think Solow’s paradox was never really about computers or about economics. It was about what humans do when efficiency arrives without clarity. We don’t use the gains to do less. We use them to do more. Unless something forces us to ask: more of what?

Three constraints worth setting

AI multiplies everything, including your lack of clarity. If you know what matters, AI is leverage. If you don’t, it allows you to accelerate without knowing where you are going.

The instinct, when everything becomes possible, is to do more. The discipline is to do less, deliberately, on at least three levels:

1) Direction: is this worth doing at all?

AI makes everything doable. That doesn’t make everything worth doing. Without a filter for what matters, you end up busy in a way that looks productive and feels empty.

This is the most basic constraint, and also the one most people skip. They jump to how (how to prompt, how to automate, how to integrate) without settling whether. The first question isn’t “how can AI help me do this?” It’s “should this be done at all?”

2) Standards: is this done, or am I offloading unfinished thinking?

When AI makes producing easy, defining “done” becomes the harder discipline. There’s always one more iteration, one more polish, one more angle. For the perfectionist, AI is a trap: it makes the gap between “good” and “perfect” feel closeable, so you never stop.

But the cost isn’t just yours. Researchers at Stanford and BetterUp coined a term for what happens when people produce without a quality constraint: “workslop,” meaning AI-generated output that looks polished but offloads cognitive work onto the recipient. Their study found that 40% of workers had received workslop in the past month. Half of them viewed the sender as less capable and less reliable afterward. A separate study found that two-thirds of workers spend up to six hours a week correcting workslop-related errors.

When you produce without a standard for “done,” you’re exporting your lack of clarity to everyone around you. Setting a constraint on quality is also an act of respect for the people downstream of your work.

3) Meaning: should a human do this, even if AI could?

This is the level of constraint we talk about too little, and the one that I think matters most.

Some things are worth doing precisely because they require effort. Writing a personal note by hand. Thinking through a difficult decision slowly, without outsourcing the reasoning. Coaching someone through a struggle in real time. The value in these acts isn’t in the output. It’s in the attention, the care, the sacrifice of time.

When you delegate these to AI, yes, you “save time.” But you’re also hollowing out the experience that made the work meaningful: the fun of figuring something out, the connection that comes from someone knowing you spent time on them, the slow thinking that leads to something that surprises you.

Not everything that can be done should be done. This constraint is about protecting the things that make work, and life, meaningful.

The constraint was removed (again). Now it’s yours to set.